I’ve always been interested in how teams innovate when building AI. At AI Engineer Europe 2026, the talks that stayed with me weren’t the ones about bigger models or faster demos. They were the ones where teams hit a wall, realized their first approach wasn’t working, and changed the architecture.

Four examples of this kind of reframing stood out to me. Each is a story about changing the unit of work, changing the control surface, or changing what “good” looks like.

1. Cursor: Stop Hardcoding the Orchestrator

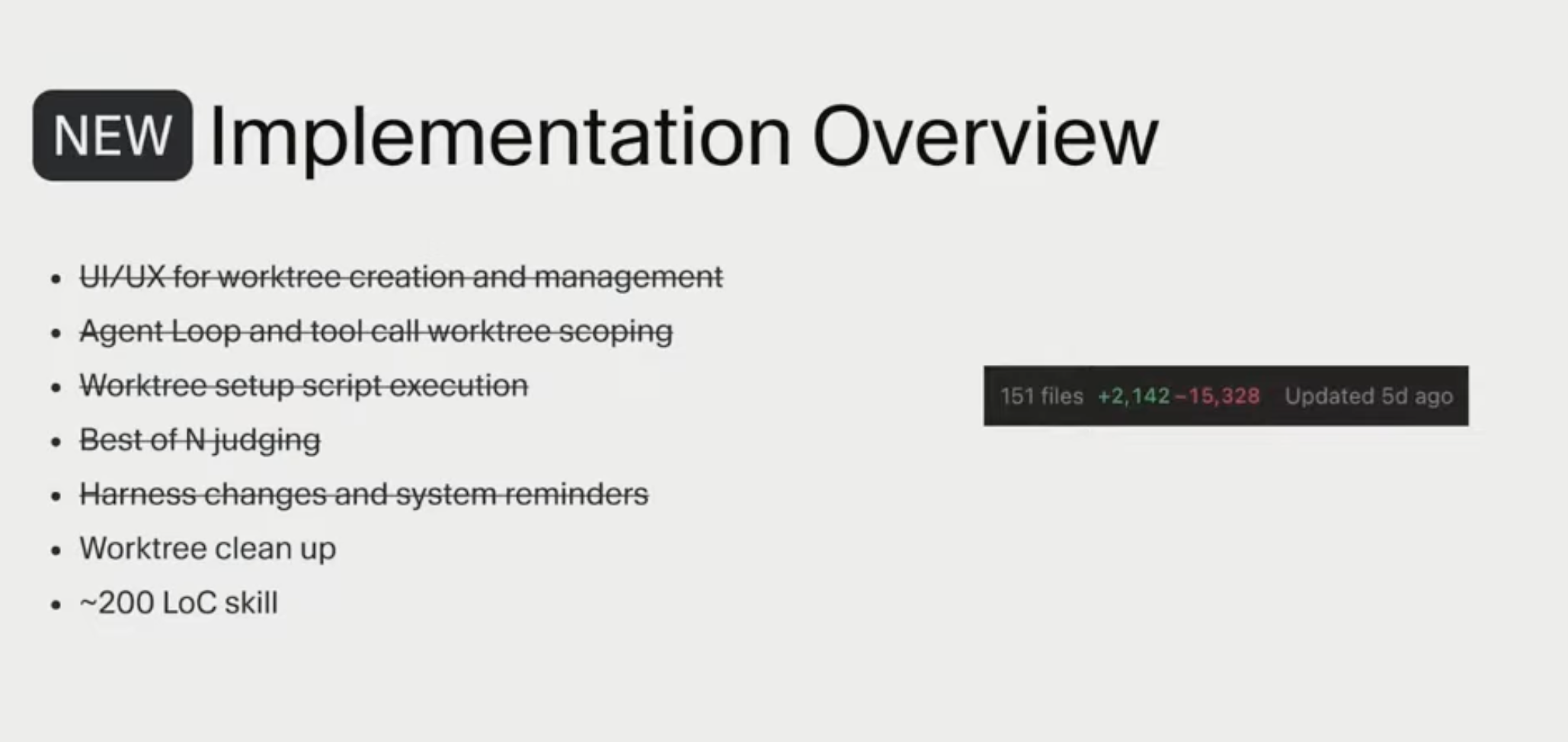

David Gomes described how Cursor had accumulated roughly 15,000 lines of orchestration logic to manage Git worktrees and agent flows. That’s a very normal engineering move: if coordination is hard, build a control plane in code. But Cursor found that this layer had become brittle, heavy, and expensive to maintain. At that point, it made sense to step back and change the approach.

They landed on skills, replacing that orchestration layer with a single skill of roughly 200 lines. Instead of asking, “How do we build software to micromanage the agent?”, Cursor asked a better question: what’s the minimum structure the agent needs to operate safely?

The old approach put coordination in application code. The new one uses a lightweight skill that tells the agent how to behave. The result wasn’t just less code; it was a system that was easier to maintain and better aligned with how the agent actually works.

Cursor’s new implementation: 15k lines deleted, replaced by a ~200-line skill

2. Cloudflare: Stop Using the Model as a Runtime

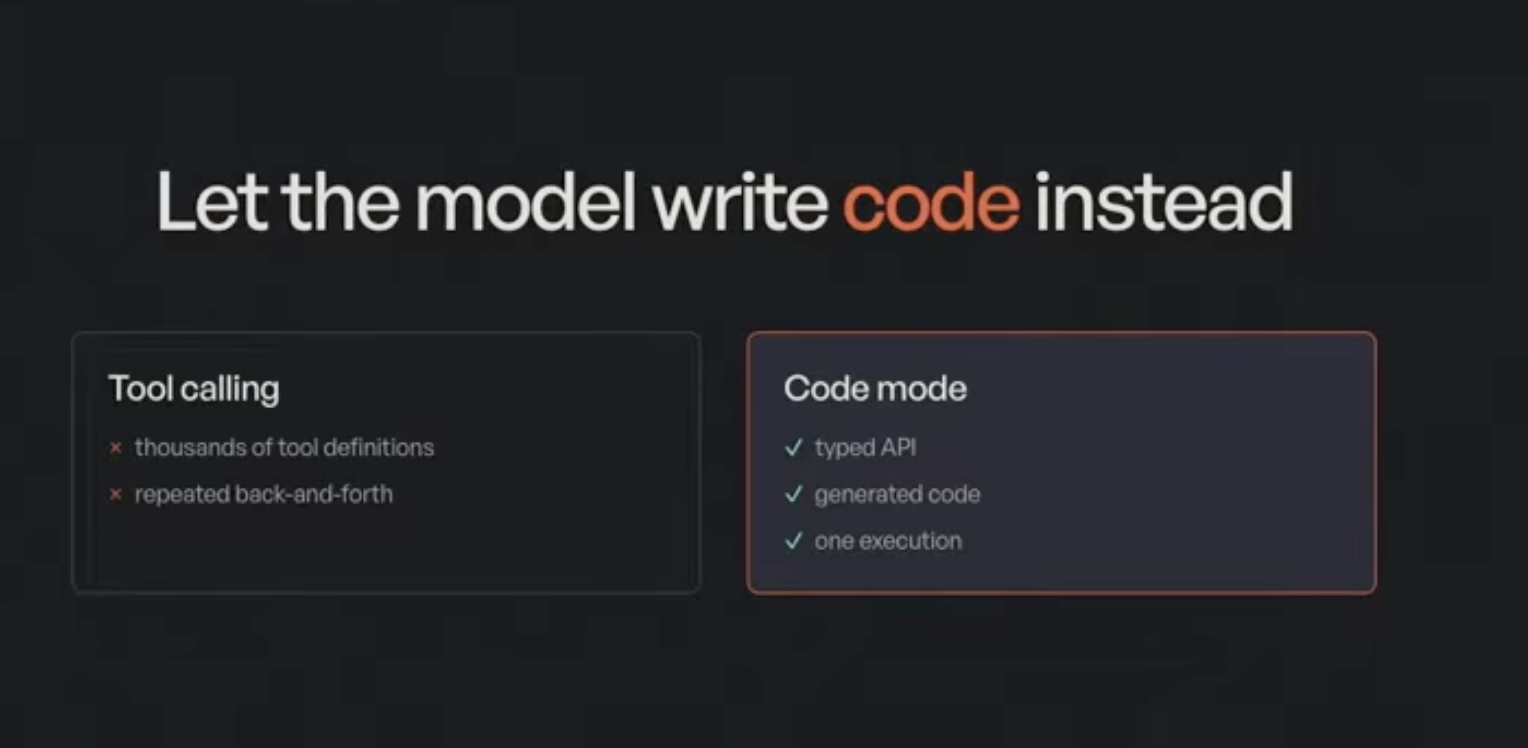

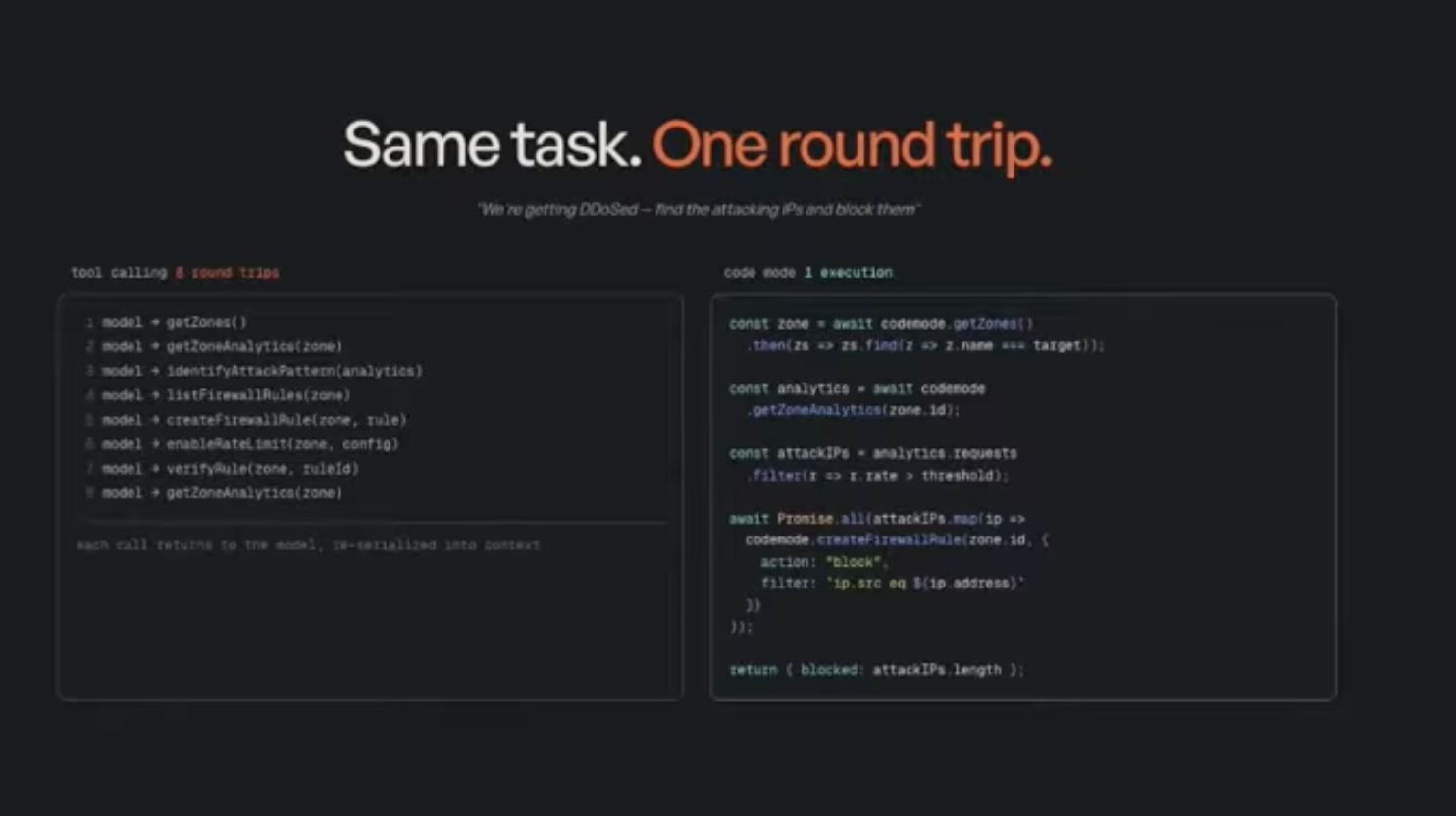

Sunil Pai described a different but related failure mode. If you expose a huge API surface to an agent through standard tool schemas, the system eventually collapses under its own interface. Cloudflare has thousands of endpoints. Representing them as conventional tools consumed around 1.2 million tokens per request.

Even worse, JSON tool calling is a poor execution model for deterministic workflows. If the task contains loops, filtering, or repeated operations, the agent ends up paying LLM latency for what’s basically ordinary program control flow.

Those signals around latency, context bloat, and complexity pushed Cloudflare to rethink the architecture.

Their answer was “Code Mode.” Instead of doing step-by-step tool calls, the model generates a complete JavaScript program and Cloudflare runs that code inside a V8 isolate. They’re still using a model, but they’re no longer using the model as the runtime for every loop and branch. The model plans once, writes the code, and the system executes it directly.

The question is no longer, “How do we make tool calling faster?” It becomes, “Which parts of this task actually require model reasoning, and which parts should just execute as code?”

Once Cloudflare looked at it that way, the architecture became much cleaner. The same task that used to require repeated back-and-forth could now run in one execution.
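To make the round-trip argument concrete, here is a minimal Python sketch that counts model turns for a hypothetical task, “find every zone with caching disabled.” It assumes that in tool-calling mode the model issues one call per turn and must read each result before deciding the next step, while in Code Mode the model writes one program that the host executes. `ZoneAPI`, `list_zones`, and `cache_enabled` are invented for illustration and are not Cloudflare’s API, and the local Python function only stands in for model-written JavaScript running in a V8 isolate.

```python
from dataclasses import dataclass


@dataclass
class ZoneAPI:
    """Hypothetical stand-in for a large typed API; not Cloudflare's real API."""
    zones: dict[str, bool]  # zone name -> whether caching is enabled

    def list_zones(self) -> list[str]:
        return list(self.zones)

    def cache_enabled(self, zone: str) -> bool:
        return self.zones[zone]


def tool_calling(api: ZoneAPI) -> tuple[list[str], int]:
    """Sequential tool calling: assume every call costs one model turn."""
    turns = 1                          # turn 1: the model calls list_zones
    zones = api.list_zones()
    disabled = []
    for zone in zones:                 # the loop lives in the model's reasoning,
        turns += 1                     # so each check is another model turn
        if not api.cache_enabled(zone):
            disabled.append(zone)
    turns += 1                         # last turn: the model reads results and answers
    return disabled, turns


def generated_program(api: ZoneAPI) -> list[str]:
    """What the model might write once in Code Mode: loop and filter run as code."""
    return [zone for zone in api.list_zones() if not api.cache_enabled(zone)]


def code_mode(api: ZoneAPI) -> tuple[list[str], int]:
    """Code Mode: one model turn to write the program, then ordinary execution."""
    turns = 1  # the model plans and writes the program once
    # The real system would run model-written JavaScript in a V8 isolate;
    # calling a local Python function here only models the turn count.
    return generated_program(api), turns


if __name__ == "__main__":
    api = ZoneAPI({"a.example": True, "b.example": False, "c.example": False})
    print(tool_calling(api))  # (['b.example', 'c.example'], 5)
    print(code_mode(api))     # (['b.example', 'c.example'], 1)
```

For n items, this sketch charges n + 2 model turns for tool calling versus 1 for Code Mode. Parallel tool calls would shrink the gap, which the sketch deliberately ignores.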

Tool calling vs. Code Mode: thousands of tool definitions and repeated back-and-forth replaced by a typed API and one execution

Same task, one round trip: the model writes a complete program instead of making sequential tool calls

3. Factory: Validation Has to Exist Before the Agent Writes Code

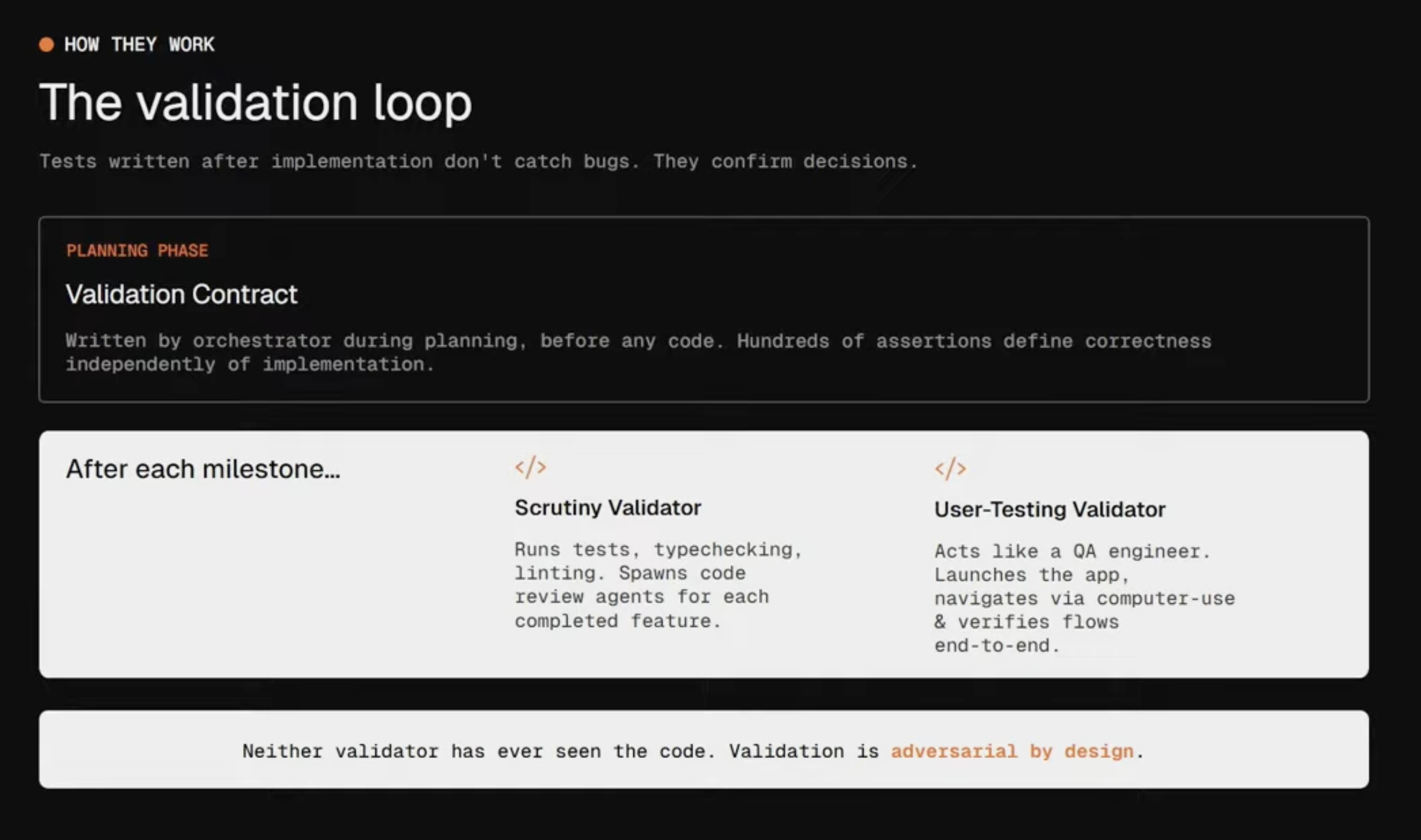

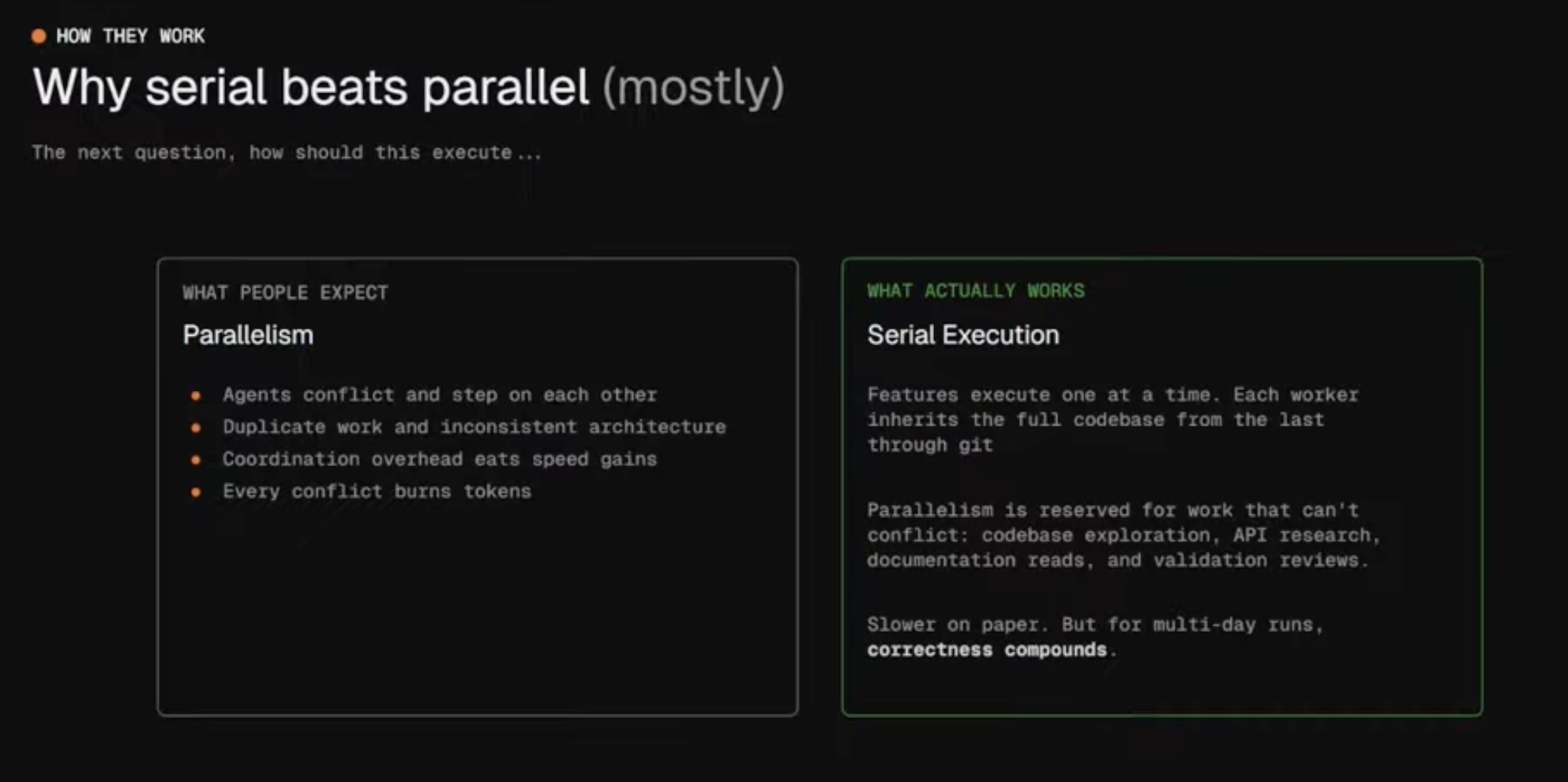

Luke Alvoeiro of Factory tackled another common instinct: if one agent is useful, then many agents in parallel should be better. That sounds reasonable. It’s also how a lot of agent systems break.

Factory found that unconstrained parallelism created duplicated work, conflicts, and drift over long-running missions. More importantly, they found a deeper problem with validation. If an agent writes the feature and then writes the tests, the tests aren’t independent evidence anymore. They mostly confirm the agent’s own decisions.

The issue here wasn’t just poor output quality. It was that correctness itself had become circular. If tests are written after implementation, the system is often checking whether the agent was consistent with itself, not whether the result was actually right. Instead of only refining evals, Factory changed the structure of the system.

Concretely, Factory moved to an Orchestrator -> Worker -> Validator architecture and, crucially, defined a validation contract before implementation began. That turns testing from “post hoc confirmation” into an independent, adversarial check on the work.
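A minimal sketch of the contract-first idea, in Python. The names `Contract`, `plan`, `worker_implement`, and `validate` are hypothetical and are not Factory’s code. What it shows: the contract is fixed during planning, and the validator receives only the contract and a callable, never the implementation source, so it checks behavior against expectations the worker did not write.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Contract:
    """Acceptance checks fixed during planning, before any implementation exists."""
    description: str
    cases: tuple[tuple[tuple, object], ...]  # (positional args, expected result)


def plan() -> Contract:
    # Orchestrator: decide what "done" means before a worker starts.
    return Contract(
        description="slugify: lowercase, join whitespace-separated words with hyphens",
        cases=(
            (("Hello World",), "hello-world"),
            (("  Trim Me  ",), "trim-me"),
            (("already-slug",), "already-slug"),
        ),
    )


def worker_implement() -> Callable[[str], str]:
    # Worker: produce an implementation (a hand-written stand-in for agent output).
    def slugify(text: str) -> str:
        return "-".join(text.lower().split())
    return slugify


def validate(contract: Contract, impl: Callable) -> list[str]:
    """Validator: black-box checks only; it calls impl but never reads its source."""
    failures = []
    for args, expected in contract.cases:
        actual = impl(*args)
        if actual != expected:
            failures.append(f"{args!r}: expected {expected!r}, got {actual!r}")
    return failures


if __name__ == "__main__":
    contract = plan()                # 1. contract first
    impl = worker_implement()        # 2. then the implementation
    print(validate(contract, impl))  # 3. independent check; prints []
```

Because the cases exist before `worker_implement` runs, a worker cannot quietly shape the tests around its own choices; it can only pass or fail them.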

This changed both the architecture and the evaluation strategy. It also led them away from the default assumption that more parallelism is always better. In software development, they found that serialized execution often beats parallel execution because correctness compounds over long-running missions.
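The serial-over-parallel point can be sketched as an orchestrator loop in which each worker starts from the state the previous worker left behind, and nothing is committed until that step’s validator passes. Everything here (`Step`, `run_serial`, `run_from_snapshot`, and the dictionary standing in for a codebase) is a hypothetical, deliberately simplified illustration, not Factory’s implementation; real parallel systems can merge or rebase, which this toy contrast leaves out.

```python
from dataclasses import dataclass
from typing import Callable

State = dict[str, str]  # hypothetical stand-in for a codebase: file name -> contents


@dataclass
class Step:
    name: str
    work: Callable[[State], State]   # worker: returns an updated copy of the state
    check: Callable[[State], bool]   # validator: this step's contract


def run_serial(steps: list[Step], state: State) -> tuple[State, list[str]]:
    """Run workers one at a time; commit only validated states; stop at the first failure."""
    log = []
    for step in steps:
        candidate = step.work(dict(state))  # start from the latest validated state
        if not step.check(candidate):
            log.append(f"{step.name}: failed, later steps not started")
            return state, log               # keep the last good state
        state = candidate                   # commit: later steps build on verified work
        log.append(f"{step.name}: ok")
    return state, log


def run_from_snapshot(steps: list[Step], state: State) -> list[tuple[str, bool]]:
    """Contrast: every worker starts from the same snapshot, as in naive parallel fan-out."""
    return [(step.name, step.check(step.work(dict(state)))) for step in steps]


if __name__ == "__main__":
    steps = [
        Step("add model", lambda s: {**s, "model.py": "class User: ..."},
             lambda s: "model.py" in s),
        # The API step's contract depends on the model already existing.
        Step("add api", lambda s: {**s, "api.py": "from model import User"},
             lambda s: "api.py" in s and "model.py" in s),
    ]
    print(run_serial(steps, {})[1])      # ['add model: ok', 'add api: ok']
    print(run_from_snapshot(steps, {}))  # [('add model', True), ('add api', False)]
```

In the serial run, the second worker sees the first worker’s validated output, so its contract can pass; started from the same empty snapshot, it cannot.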

Factory’s validation loop: contracts are written during planning, and validators never see the implementation code

Why serial beats parallel: for multi-day missions, correctness compounds when workers execute one at a time

4. OpenAI, Comet, and Others: The Engineer’s Job Has Been Reframed

The biggest shift from the conference may be the one that applies to all of us. Several speakers argued that we need to stop centering the human-written line of code and start centering the systems, constraints, and standards that shape what agents produce.

Ryan Lopopolo from OpenAI said it directly: code is free. Vincent Koc described “dark factories” with dozens of parallel agent swim lanes. Matt Pocock argued that without design constraints, you get AI slop. Armin Ronacher and Cristina Poncela Cubeiro emphasized that agent-friendly codebases need explicit friction, not total freedom.

Taken together, these talks point to a different definition of engineering work. The role shifts from “writing the logic” toward:

- defining the shape of the system

- encoding taste in tests, linters, and error messages

- deciding what the agent is allowed to do without approval

- building the constraints that keep fast generation from turning into fast decay
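One way to make “what the agent is allowed to do without approval” explicit is a small policy function that the harness consults before running any shell command the agent proposes. This is a hypothetical sketch: the command lists, the `decide` function, and the allow/ask/deny split are illustrative assumptions. It also assumes the harness executes the parsed argument list directly rather than through a shell, and it conservatively sends anything containing shell metacharacters to a human.

```python
import shlex
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"  # run without asking
    ASK = "ask"      # pause for human approval
    DENY = "deny"    # never run


# Hypothetical policy lists; a real team would tune these to its own repository.
AUTO_APPROVED = {"ls", "cat", "grep", "pytest"}
ALWAYS_DENIED = {"rm", "sudo", "curl"}
GIT_READ_ONLY = {"status", "diff", "log"}
SHELL_METACHARACTERS = set(";&|<>`$")


def decide(command: str) -> Decision:
    """Classify a shell command an agent wants to run."""
    # Chaining, pipes, redirects, and substitutions could hide a second command,
    # so any of them sends the request to a human.
    if any(ch in SHELL_METACHARACTERS for ch in command):
        return Decision.ASK
    try:
        argv = shlex.split(command)
    except ValueError:  # e.g. unbalanced quotes
        return Decision.DENY
    if not argv:
        return Decision.DENY
    program = argv[0]
    if program in ALWAYS_DENIED:
        return Decision.DENY
    if program == "git":
        # Read-only subcommands are safe; anything that writes needs approval.
        sub = argv[1] if len(argv) > 1 else ""
        return Decision.ALLOW if sub in GIT_READ_ONLY else Decision.ASK
    if program in AUTO_APPROVED:
        return Decision.ALLOW
    return Decision.ASK  # default for anything unknown


if __name__ == "__main__":
    for cmd in ["git status", "git push origin main", "rm -rf build", "cat a && rm a"]:
        print(f"{cmd!r}: {decide(cmd).value}")
```

Defaulting unknown commands to `ASK` keeps the policy safe as the agent’s toolset grows; the lists only ever widen what runs unattended.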

This is where the idea of “taste” becomes useful. Taste here isn’t aesthetics. It’s the ability to encode non-obvious constraints before the system scales and before low-quality patterns spread through the codebase. In that sense, the senior engineer’s job shifts away from pure implementation and toward setting direction and constraints.
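Encoding taste in linters and error messages can be as small as a custom check whose message tells the agent exactly what to do instead. The sketch below uses Python’s standard `ast` module; the two rules (no `print()` in library code, no bare `except:`) and their wording are hypothetical examples of a team convention, not rules any of the speakers described.

```python
import ast
from dataclasses import dataclass


@dataclass
class Finding:
    line: int
    message: str


# Each message states what to do instead, so an agent reading lint output can
# fix the problem without guessing. Both rules are illustrative examples.
NO_PRINT = ("print() is not allowed in library code; use a module logger "
            "(logger = logging.getLogger(__name__)) so output can be filtered.")
NO_BARE_EXCEPT = ("bare 'except:' also catches KeyboardInterrupt and hides bugs; "
                  "catch the specific exception you expect, e.g. 'except ValueError:'.")


def lint(source: str) -> list[Finding]:
    """Return findings for a module's source, sorted by line number."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if (isinstance(node, ast.Call) and isinstance(node.func, ast.Name)
                and node.func.id == "print"):
            findings.append(Finding(node.lineno, NO_PRINT))
        elif isinstance(node, ast.ExceptHandler) and node.type is None:
            findings.append(Finding(node.lineno, NO_BARE_EXCEPT))
    return sorted(findings, key=lambda f: f.line)


if __name__ == "__main__":
    sample = (
        "def load(path):\n"
        "    try:\n"
        "        return open(path).read()\n"
        "    except:\n"
        "        print('failed')\n"
    )
    for finding in lint(sample):
        print(f"line {finding.line}: {finding.message}")
```

Because each message names the fix, the agent’s next iteration can correct the code directly. This is one way to put “explicit friction” into practice.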

OpenAI’s Ryan Lopopolo: engineering work shifts to systems, scaffolding, and leverage

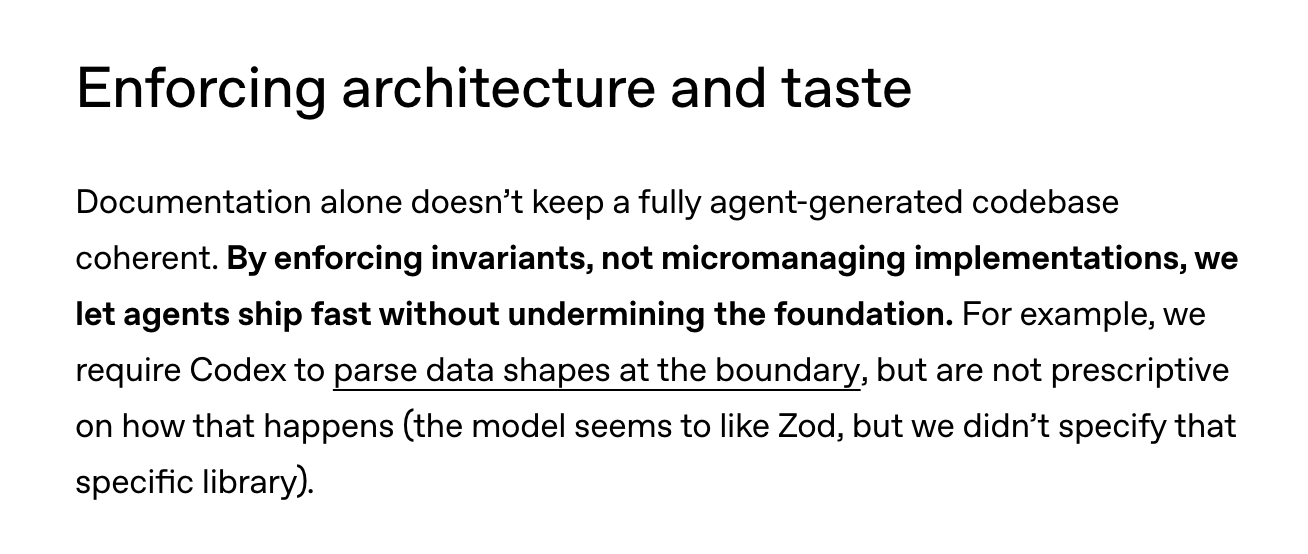

Enforcing invariants rather than micromanaging implementations: let agents ship fast within guardrails (from Harness Engineering)

The Practical Lesson for Anyone Building Agents

The thread running through all of these talks is that the teams who made progress weren’t the ones who optimized harder within their existing approach. They were the ones who stepped back and asked whether they were solving the right problem. That’s the skill I keep coming back to in my own work: recognizing when you’re stuck because the framing is wrong, not the implementation.

I teach a course on exactly this: how to reframe AI problems so you’re not just building faster, but building the right thing. If that resonates, you can find more about me and the course here.